How to Manage Multiple OpenShift Clusters with RHACM — Hybrid Cloud

Hybrid cloud is an IT architecture that incorporates some degree of workload portability, orchestration, and management across 2 or more environments — https://www.redhat.com/en/topics/cloud-computing/what-is-hybrid-cloud

Hybrid cloud is a term I hear more and more these days. Sometimes people use it as a buzzword to attract customers or to sound cool, and sometimes people actually abstract their workloads across multiple environments, turning their platforms into hybrid ecosystems. Either way, going hybrid seems to have become a fundamental decision.

Everybody’s doing it, everybody is going hybrid. But why?

- Think about public cloud providers such as Azure, AWS, GCP or IBM Cloud. They offer similar services, but you might want to deploy a storage-intensive application on AWS because of better object storage performance, and your highly confidential application on GCP because of better security features. This way, you choose a cloud provider based on the added value it gives. Going hybrid means adapting the platform to your application, not your application to the platform.

- Since platforms that manage your workload (e.g., Kubernetes) are so easy to deploy nowadays, there is no real reason to rely on a single platform for all of your workload. Say an organization runs a huge workload on one Kubernetes cluster with thousands of worker nodes. Hypothetically, if the organization’s infrastructure has a bad day and the cluster’s control plane goes down, the whole environment is affected by the failure. If the organization splits the load into isolated, hybrid environments that do not affect each other, a failure in one environment does not affect the rest of the load.

OpenShift Multi-Clustering

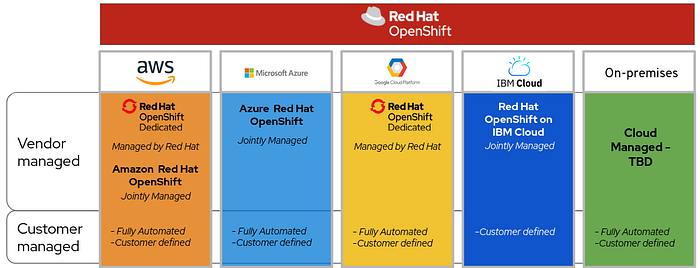

Since I work for Red Hat, OpenShift is the first platform that comes to mind when I talk about hybrid cloud. OpenShift has been adapted to run on basically every popular cloud provider with no issues, and it offers a very simple deployment process, “with a click of a button”.

OpenShift became a very comfortable environment to run on: it’s reliable, easy to learn and easy to develop on. Therefore, organizations started deploying multiple clusters in their environments for various reasons:

- Clusters for prod environments.

- Clusters for non-prod and testing environments.

- Clusters for certain features in the organization’s load (eg GPU).

- Clusters that will be at an edge environment.

The number of clusters per organization has grown substantially. But it turned out that there is a huge difference between having one cluster and having 100 clusters in terms of management, security and maintenance. When you manage one cluster with one set of applications, one set of security settings and one set of management configurations, you can do everything from a single management point (the OpenShift console, the CLI or the API). Managing 100 clusters is a whole different level.

There are many things to consider when you manage a large number of clusters. Configurations can drift between clusters, and an application setting that changes in one cluster may stay unchanged in the rest. And don’t forget about security! The larger the environment gets, the bigger the security threats that come with it.

Introducing Red Hat Advanced Cluster Management (RHACM)

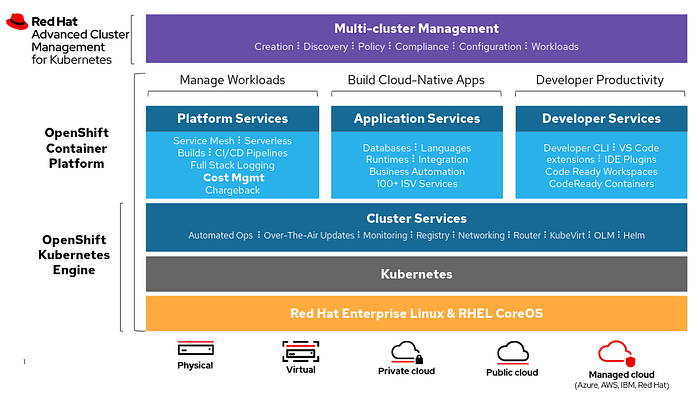

RHACM was made to solve the multi-cluster management issue. Using RHACM, you will be able to manage multiple OpenShift clusters, enforce policies, create rules, visualize applications and manage workflow in the environment.

Red Hat Advanced Cluster Management Architecture

Deploying RHACM requires a whole OpenShift cluster that will act as a HUB. The purpose of the HUB OpenShift cluster is to run RHACM and to manage other clusters in the environment. The clusters that the HUB manages are called Managed Clusters. The managed clusters are enrolled into the HUB, or created using the HUB itself!

RHACM Demo

Let’s get practical! In this demo I will go through the main RHACM features and concepts:

- Install Red Hat Advanced Cluster Management.

- Import an OpenShift cluster from AWS into the HUB management portal.

- Deploy a simple application onto the managed cluster using the HUB.

- Configure management rules and security policies for the managed cluster using the HUB.

Installing RHACM

The RHACM hub OpenShift cluster used in this demo has the following characteristics:

- OpenShift 4.6

- 3 workers (4 CPU x 16 GB)

- 3 masters (4 CPU x 16 GB)

- Connected to the internet

Note that the minimum version for an OpenShift cluster that supports RHACM is OCP 4.3.

The OpenShift cluster created will only be used as a HUB cluster. No other applications will be installed on it.

Installation steps —

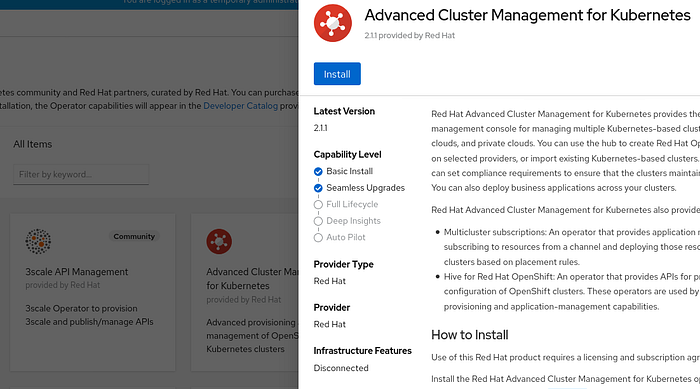

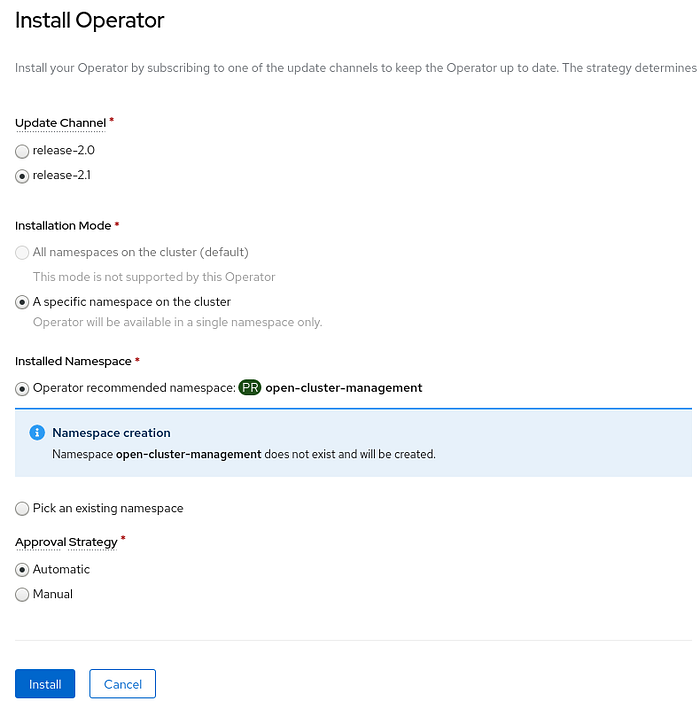

- Log in to the OpenShift HUB cluster using the web console and navigate to Operators → OperatorHub.

- Search for ‘Advanced Cluster Management for Kubernetes’, take note of the operator version, and click ‘Install’.

- Install the operator in the default namespace suggested by the operator: ‘open-cluster-management’.

NOTE: RHACM will create namespaces called local-cluster, open-cluster-management-agent and open-cluster-management-agent-addon for managing itself in the RHACM management stack. Make sure that there are no namespaces with these names before deploying the operator.

- Using the OpenShift web console, navigate to Operators → Installed Operators → Advanced Cluster Management for Kubernetes → MultiClusterHub, and click ‘Create MultiClusterHub’. This is where you create the hub definition itself.

- In this demo, we will not customize anything. We will keep the default values for the MultiClusterHub object definition and click Create. The customizable parameters are described in the official documentation.

- Open a terminal, log in to the HUB cluster with the ‘oc’ command, and watch the pods in the open-cluster-management namespace:

<hub cluster console> $ watch -n 1 oc get pods -n open-cluster-management

- When all pods are in the ‘Running’ state, get the RHACM route so that you can log in to the RHACM console:

<hub cluster console> $ oc get route -n open-cluster-management

NAME HOST/PORT PATH SERVICES PORT TERMINATION WILDCARD

multicloud-console multicloud-console.apps.<cluster-name>.<domain> management-ingress <all> passthrough/Redirect None

- Log in to RHACM using the route, with the same credentials you used to log in to the OpenShift cluster.
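If you prefer the CLI, the console steps above can also be scripted. The following is a minimal sketch rather than the documented procedure: it assumes the operator’s OLM package is named `advanced-cluster-management` in the `redhat-operators` catalog and that a channel such as `release-2.1` exists there (list the real channels with `oc get packagemanifest advanced-cluster-management -n openshift-marketplace`). The helper names are hypothetical.

```shell
# Hypothetical helpers: print the manifests for a CLI-driven RHACM install.
# Package, catalog and channel names are assumptions; verify them first.
render_acm_operator() {
  local channel="${1:-release-2.1}"   # assumed channel name
  cat <<EOF
apiVersion: v1
kind: Namespace
metadata:
  name: open-cluster-management
---
apiVersion: operators.coreos.com/v1
kind: OperatorGroup
metadata:
  name: acm-operator-group
  namespace: open-cluster-management
spec:
  targetNamespaces:
    - open-cluster-management
---
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: acm-operator-subscription
  namespace: open-cluster-management
spec:
  name: advanced-cluster-management
  source: redhat-operators
  sourceNamespace: openshift-marketplace
  channel: ${channel}
  installPlanApproval: Automatic
EOF
}

# An empty spec keeps the default values, as in the console step above.
render_multiclusterhub() {
  cat <<EOF
apiVersion: operator.open-cluster-management.io/v1
kind: MultiClusterHub
metadata:
  name: multiclusterhub
  namespace: open-cluster-management
spec: {}
EOF
}

# Usage on the hub cluster:
#   render_acm_operator release-2.1 | oc apply -f -
#   # wait until the operator's CSV (oc get csv -n open-cluster-management) is Succeeded, then:
#   render_multiclusterhub | oc apply -f -
#   oc get multiclusterhub multiclusterhub -n open-cluster-management -o jsonpath='{.status.phase}'
```

Printing the manifests instead of applying them directly keeps them reviewable, and the same output can be piped to `oc apply`, diffed, or committed to Git.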

Importing a Cluster into RHACM

To import an existing OpenShift cluster into RHACM you will have to log into your RHACM web administration portal and navigate to Cluster lifecycle > Add cluster > Import an existing cluster.

Fill in the details of your managed cluster:

- Cluster Name: the name that represents the imported cluster in RHACM.

- Cloud: the name of the cloud provider.

- Environment: a label that identifies the cluster’s environment, usually prod, dev or qa.

- Additional labels: any additional labels you’d like to apply to the cluster.

RHACM uses labels to apply fine-grained policies to the managed clusters. For example, if you’d like to apply a restrictive resource policy to production clusters, you would create a policy and associate it with the environment=prod label.
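On the hub, every imported cluster is represented by a cluster-scoped `ManagedCluster` resource, and the labels from the import form end up in its `metadata.labels`, which is what placement rules select on. A minimal sketch, assuming the `cluster.open-cluster-management.io/v1` API; the helper name and label values are illustrative. Creating this object alone does not complete an import: the klusterlet still has to be deployed on the managed cluster, which is what the generated import command does.

```shell
# Hypothetical helper: print a ManagedCluster object carrying the labels
# entered in the import form. Placement rules select clusters by these labels.
render_managed_cluster() {
  local name="$1" cloud="$2" environment="$3"
  cat <<EOF
apiVersion: cluster.open-cluster-management.io/v1
kind: ManagedCluster
metadata:
  name: ${name}
  labels:
    cloud: ${cloud}
    environment: ${environment}
spec:
  hubAcceptsClient: true
EOF
}

# Usage on the hub (cluster name is illustrative):
#   render_managed_cluster aws-cluster Amazon prod | oc apply -f -
# Labels can also be changed later without re-importing:
#   oc label managedcluster aws-cluster environment=dev --overwrite
```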

Click Generate command, copy the generated command, and run it on your managed cluster:

<managed cluster console>$ echo Ci0tLQ... | base64 --decode | kubectl apply -f -

customresourcedefinition.apiextensions.k8s.io/klusterlets.operator.open-cluster-management.io created

clusterrole.rbac.authorization.k8s.io/klusterlet created

clusterrole.rbac.authorization.k8s.io/open-cluster-management:klusterlet-admin-aggregate-clusterrole created

clusterrolebinding.rbac.authorization.k8s.io/klusterlet created

namespace/open-cluster-management-agent created

secret/bootstrap-hub-kubeconfig created

serviceaccount/klusterlet created

deployment.apps/klusterlet created

klusterlet.operator.open-cluster-management.io/klusterlet created

Validate that all of the ACM agent pods are running on the managed cluster:

<managed cluster console>$ oc get pod -n open-cluster-management-agent

NAME READY STATUS RESTARTS AGE

klusterlet 1/1 Running 0 5m44s

klusterlet-registration-agent 1/1 Running 0 5m32s

klusterlet-registration-agent 1/1 Running 0 5m32s

klusterlet-registration-agent 1/1 Running 0 5m32s

klusterlet-work-agent 1/1 Running 0 5m32s

klusterlet-work-agent 1/1 Running 1 5m32s

klusterlet-work-agent 1/1 Running 0 5m32s

Your managed cluster is now imported into the RHACM HUB:

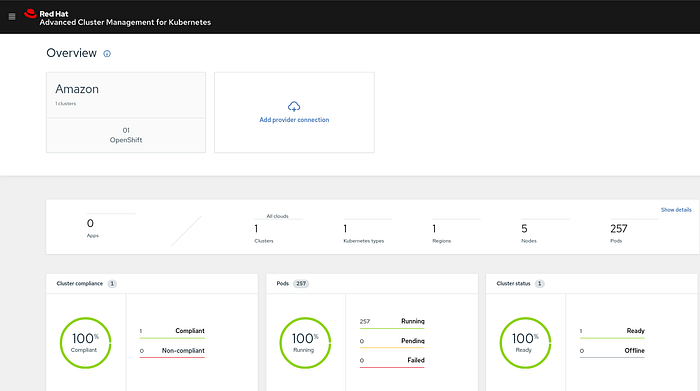

As you can see, we have successfully imported a cluster into the HUB. RHACM automatically identified that the cluster runs on AWS, that it has 257 running pods and that it consists of 5 nodes. For further diagnostics and information, click the Show details button.

Deploying an Application using RHACM

Now that we have a managed cluster in our RHACM hub, we can deploy an application on this cluster using the RHACM hub! Application deployment using RHACM implements the “Infrastructure as Code” methodology, together with the GitOps strategy.

GitOps in short is a set of practices to use Git pull requests to manage infrastructure and application configurations. Git repository in GitOps is considered the only source of truth and contains the entire state of the system so that the trail of changes to the system state are visible and auditable. — openshift.com

In our case, we will have to prepare Kubernetes resources that represent our application in advance, and put them in a Git repository. RHACM HUB will use these resources to create the desired application architecture in its managed clusters.

In my example, I will deploy a MariaDB database on my managed cluster. I will create two resources: a DeploymentConfig that holds the deployment strategy for the database itself, and a Secret that holds the credentials of the database’s root user.
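The post doesn’t reproduce the repository’s manifests, so the sketch below is an illustration only. It assumes the Red Hat image `registry.redhat.io/rhel8/mariadb-103`, which reads the root password from `MYSQL_ROOT_PASSWORD`, and a hypothetical Secret key `root-password`; the resource names (`mariadb`) match the `oc get` output shown later in this post. Committing a real password to Git is unsafe; the value here is a demo placeholder.

```shell
# Hypothetical helper: print the manifests that would live in the Git repo.
# Image name and Secret key are assumptions, not taken from the original repo.
render_mariadb_manifests() {
  local root_password="$1"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: mariadb
type: Opaque
stringData:
  root-password: ${root_password}
---
apiVersion: apps.openshift.io/v1
kind: DeploymentConfig
metadata:
  name: mariadb
spec:
  replicas: 1
  selector:
    app: mariadb
  strategy:
    type: Recreate
  triggers:
    - type: ConfigChange   # roll out a new version when the pod template changes
  template:
    metadata:
      labels:
        app: mariadb
    spec:
      containers:
        - name: mariadb
          image: registry.redhat.io/rhel8/mariadb-103
          ports:
            - containerPort: 3306
          env:
            - name: MYSQL_ROOT_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mariadb
                  key: root-password
EOF
}

# Usage: generate the file once and commit it to the application's Git path.
#   render_mariadb_manifests 'changeme' > mariadb.yml
```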

To deploy the database resources on the managed cluster, some CRs (custom resources) need to be created on the hub cluster -

- Namespace — There is a need for a namespace per application deployed by the Hub Openshift cluster. The namespace will contain the resources that represent the application in the managed clusters.

- Channel — The Channel resource represents the Git repository on which the application’s Kubernetes resources are on.

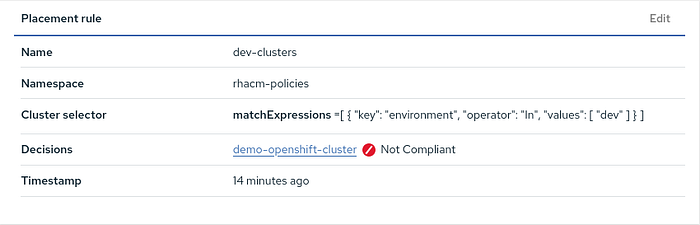

- PlacementRule — The PlacementRule resource represents the clusters on which the application will be deployed. The resource uses selectors to filter through the clusters and choose relevant clusters based on their tags (just like how services filter traffic through pods).

- Subscription — The Subscription resource is used as a connecting resource between the Channel and the PlacementRule resources. It basically manages which Kubernetes resources will be deployed on which clusters.

- Application — The Application resource is used to group the rest of the resources into a single management view of the application itself. The application resource will reference the Subscription resource.

All of the CRs need to be created on the hub cluster in order to successfully deploy an application on the managed cluster. In this example, I will be using my preconfigured GitLab repository to deploy these resources.

Using the GitLab repository, I will create all of the resources mentioned above using a single YAML file on the HUB OpenShift cluster -

<hub cluster console>$ git clone https://gitlab.com/michael.kot/rhacm-demo.git

<hub cluster console>$ oc create -f ./rhacm-demo/rhacm-resources/application.yml
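The post doesn’t show the contents of application.yml. The sketch below illustrates what such a file could contain for the hub-side resources described above; it is a reconstruction under assumptions, not the repository’s actual file. The `git-branch` value (`master`), the `git-path` value, the `environment` label used by the PlacementRule, and the helper name are all hypothetical.

```shell
# Hypothetical helper: print the hub-side resources that deploy the manifests
# stored under <path> in a Git repo onto clusters labelled environment=<env>.
# The branch name (master) is an assumption.
render_git_app() {
  local app="$1" ns="$2" repo="$3" path="$4" env="$5"
  cat <<EOF
apiVersion: v1
kind: Namespace
metadata:
  name: ${ns}
---
apiVersion: apps.open-cluster-management.io/v1
kind: Channel
metadata:
  name: ${app}-channel
  namespace: ${ns}
spec:
  type: Git
  pathname: ${repo}
---
apiVersion: apps.open-cluster-management.io/v1
kind: PlacementRule
metadata:
  name: ${app}-placement
  namespace: ${ns}
spec:
  clusterSelector:
    matchLabels:
      environment: ${env}
---
apiVersion: apps.open-cluster-management.io/v1
kind: Subscription
metadata:
  name: ${app}-subscription
  namespace: ${ns}
  labels:
    app: ${app}   # matched by the Application selector below
  annotations:
    apps.open-cluster-management.io/git-branch: master
    apps.open-cluster-management.io/git-path: ${path}
spec:
  channel: ${ns}/${app}-channel
  placement:
    placementRef:
      kind: PlacementRule
      name: ${app}-placement
---
apiVersion: app.k8s.io/v1beta1
kind: Application
metadata:
  name: ${app}
  namespace: ${ns}
spec:
  componentKinds:
    - group: apps.open-cluster-management.io
      kind: Subscription
  selector:
    matchExpressions:
      - key: app
        operator: In
        values:
          - ${app}
EOF
}

# Usage on the hub (the git path "mariadb" is a placeholder):
#   render_git_app mariadb-app mariadb https://gitlab.com/michael.kot/rhacm-demo.git mariadb prod | oc create -f -
```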

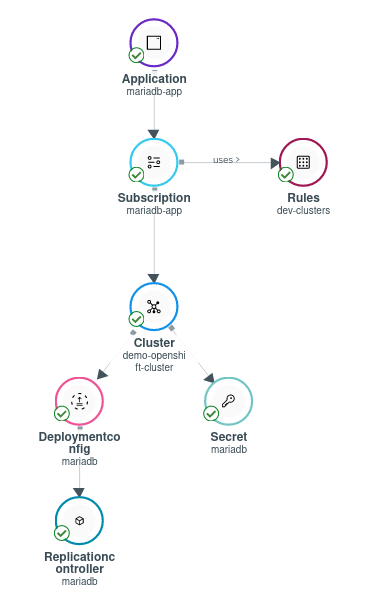

Now that all of the resources are created, I can log into RHACM’s UI and go to the ‘Application lifecycle’ tab. Over there, I can see that I have one application deployed — ‘mariadb-app’. I can see that the application has -

- 1 Subscription resource associated with it.

- 1 managed cluster that runs the application.

- 1 Pod that runs the load itself — The MariaDB server.

RHACM presents a clear view of the created resources. You can see the resources created on the hub cluster alongside their references (Application, Subscription and PlacementRule), as well as the resources created for the application itself on the managed cluster (DeploymentConfig, ReplicationController and Secret).

Let’s take a look at the Managed cluster. Log into the cluster and take a look at the newly created mariadb namespace and resources. Just as described by the RHACM UI, you will be able to see the resources created by the Hub server.

<managed cluster console>$ oc get all -n mariadb

NAME READY STATUS RESTARTS AGE

pod/mariadb-1-deploy 0/1 Completed 0 7m34s

pod/mariadb-1-wcb27 1/1 Running 0 7m31s

NAME DESIRED CURRENT READY AGE

replicationcontroller/mariadb-1 1 1 1 7m34s

NAME REVISION DESIRED CURRENT TRIGGERED BY

deploymentconfig.apps.openshift.io/mariadb 1 1 1 config

<managed cluster console>$ oc get secrets -n mariadb

NAME TYPE DATA AGE

...

mariadb Opaque 1 8m26s

Configuring Policies on Managed Clusters

To implement further control over resources in the managed cluster, we can create policies to manage security and configurations over large groups of clusters.

The policies will apply certain restrictions on top of the managed cluster. The restrictions can be related to management, like global quotas for all managed clusters, or security, like certificate policies that monitor the expiration date of a certain certificate in the managed clusters.

Policies can either enforce a certain configuration or only inform you when a managed cluster does not meet the requirements stated by the policy. For example, you would enforce a global quota on the managed clusters, but only inform the administrator when a certificate is about to expire.
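To make the enforce/inform distinction concrete, here is a sketch of the global quota example as a `Policy` wrapping a `ConfigurationPolicy`. With `remediationAction: enforce` and `complianceType: musthave`, the policy controller creates the `ResourceQuota` when it is missing; with `inform` it only reports the cluster as non-compliant. The quota values, the `default` target namespace and the helper name are hypothetical, and like any policy it would still need a PlacementRule and a PlacementBinding to reach any clusters.

```shell
# Hypothetical helper: print a Policy that wraps a ConfigurationPolicy for a
# ResourceQuota. $2 selects enforce (create/fix it) or inform (report only).
render_quota_policy() {
  local ns="$1" action="$2"
  case "$action" in
    inform|enforce) ;;
    *) echo "remediation action must be 'inform' or 'enforce'" >&2; return 1 ;;
  esac
  cat <<EOF
apiVersion: policy.open-cluster-management.io/v1
kind: Policy
metadata:
  name: policy-global-quota
  namespace: ${ns}
spec:
  remediationAction: ${action}
  disabled: false
  policy-templates:
    - objectDefinition:
        apiVersion: policy.open-cluster-management.io/v1
        kind: ConfigurationPolicy
        metadata:
          name: policy-global-quota-default
        spec:
          remediationAction: ${action}
          severity: medium
          namespaceSelector:
            include: ["default"]
            exclude: []
          object-templates:
            - complianceType: musthave
              objectDefinition:
                apiVersion: v1
                kind: ResourceQuota
                metadata:
                  name: global-quota
                spec:
                  hard:
                    requests.cpu: "4"
                    requests.memory: 8Gi
EOF
}

# Usage on the hub:
#   render_quota_policy rhacm-policies enforce | oc apply -f -
```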

Let’s move forward with a demo —

In the next demo, I’m going to show how to apply a certificate policy that monitors whether OpenShift’s ingress controller certificate expires within the next 100 days.

To deploy the RHACM policy on the managed cluster, some CRs (custom resources) need to be created on the hub cluster -

- Namespace: the policies deployed by the HUB need a namespace on the hub cluster. The namespace contains the resources that represent the policies applied to the managed clusters.

- PlacementRule: just like with applications, a placement rule indicates which clusters are affected by the policy. The PlacementRule applies only to clusters with the specified label.

- Policy: the Policy resource represents the policy itself. It sets the rules that will be applied, the severity, the namespaces that the policy applies to, and whether the rule is enforced or only informs the administrator when the cluster drifts from the desired state.

- PlacementBinding: the PlacementBinding resource connects the Policy and PlacementRule resources. It indicates which policy is applied to which clusters.

All of the CRs need to be created on the hub cluster in order to successfully apply the policy to the managed cluster. In this example, I will be using my preconfigured GitLab repository to deploy these resources.

Using the GitLab repository, I will create all of the resources mentioned above using a single YAML file on the HUB OpenShift cluster -

<hub cluster console>$ git clone https://gitlab.com/michael.kot/rhacm-policy.git

<hub cluster console>$ oc create -f ./rhacm-policy/cert_expiration-policy.yaml
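The repository’s cert_expiration-policy.yaml isn’t reproduced here. A minimal sketch of equivalent resources, assuming the `CertificatePolicy` kind with its `minimumDuration` field (a Go-style duration, so 100 days becomes `2400h`) and assuming the default ingress certificate lives in the `openshift-ingress` namespace. The `rhacm-policies` namespace, every resource name except policy-certificate, and the helper name are hypothetical.

```shell
# Hypothetical helper: print the namespace, certificate policy, placement rule
# and binding. $3 is the minimum remaining certificate validity in days.
render_cert_policy() {
  local ns="$1" env="$2" days="$3"
  local hours=$((days * 24))   # minimumDuration takes a Go duration, e.g. 2400h
  cat <<EOF
apiVersion: v1
kind: Namespace
metadata:
  name: ${ns}
---
apiVersion: policy.open-cluster-management.io/v1
kind: Policy
metadata:
  name: policy-certificate
  namespace: ${ns}
spec:
  remediationAction: inform   # report violations, do not remediate
  disabled: false
  policy-templates:
    - objectDefinition:
        apiVersion: policy.open-cluster-management.io/v1
        kind: CertificatePolicy
        metadata:
          name: policy-certificate-ingress
        spec:
          namespaceSelector:
            include: ["openshift-ingress"]
            exclude: []
          remediationAction: inform
          severity: low
          minimumDuration: ${hours}h
---
apiVersion: apps.open-cluster-management.io/v1
kind: PlacementRule
metadata:
  name: placement-policy-certificate
  namespace: ${ns}
spec:
  clusterSelector:
    matchLabels:
      environment: ${env}
---
apiVersion: policy.open-cluster-management.io/v1
kind: PlacementBinding
metadata:
  name: binding-policy-certificate
  namespace: ${ns}
placementRef:
  name: placement-policy-certificate
  kind: PlacementRule
  apiGroup: apps.open-cluster-management.io
subjects:
  - name: policy-certificate
    kind: Policy
    apiGroup: policy.open-cluster-management.io
EOF
}

# Usage on the hub:
#   render_cert_policy rhacm-policies prod 100 | oc create -f -
```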

After creating the policy, navigate from RHACM’s homepage to Governance, Risk, and Compliance → policy-certificate. We can see that 1 cluster is not compliant with the specified policy!

To investigate further, move to the Violations tab and check the reason for the violation.

Using this data, the organization’s security administrator can update the ingress router certificate, proactively avoiding issues in the OpenShift cluster.
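The same compliance data can also be read from the CLI on the hub. A small sketch, assuming the RHACM 2.x root-policy status layout, where `status.status` holds one entry per placed cluster with `clustername` and `compliant` fields; the helper name and the `rhacm-policies` namespace are hypothetical. It prints each cluster’s state followed by a count of non-compliant clusters.

```shell
# Hypothetical helper: print one "cluster<TAB>state" line per placed cluster,
# then a final count of NonCompliant clusters. Assumes the RHACM 2.x root
# policy status layout (status.status[].clustername / .compliant).
policy_compliance() {
  local policy="$1" namespace="$2"
  oc get policy "$policy" -n "$namespace" \
    -o jsonpath='{range .status.status[*]}{.clustername}{"\t"}{.compliant}{"\n"}{end}' |
    awk -F '\t' '
      { print }                         # echo each cluster line
      $2 == "NonCompliant" { bad++ }    # count violations
      END { printf "non-compliant clusters: %d\n", bad + 0 }'
}

# Usage on the hub cluster:
#   policy_compliance policy-certificate rhacm-policies
```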

Conclusion

As stated at the beginning, moving to hybrid cloud has become a popular approach. Everybody’s doing it: everybody is choosing the services they want from each cloud provider and combining them in their complex applications.

Red Hat steps into the “hybrid” game as a big player, allowing OpenShift to run in every environment, whether it’s on-premises, on a public cloud or in a disconnected edge environment.

Now that many organizations run multiple OpenShift clusters as the main container platform for their applications, a new need arises: managing these clusters from a single, unified management console. That console is RHACM.

RHACM is amazing. It allows developers to deploy their applications much more easily, system administrators to control every aspect of the organization’s infrastructure, and security administrators to apply security policies that alert them when managed clusters drift from the desired state.

In the future, RHACM will provide much more — more visibility with Grafana, more automation with Ansible, and more platforms that will integrate with RHACM for easier administration.

Stay tuned!